Implementing AI risk management and transparency to align with comprehensive regulations like the EU AI Act can be challenging for organizations of any size.

Trusted AI understands these challenges. We help clients across verticals harness the value of AI by de-risking it early in their adoption journey.

The risks include fines under current and new regulations, as well as the security, privacy, transparency, explainability, accountability, and trustworthiness risks that these regulations are enacted to prevent.

Enterprises will need to earn the trust of customers and regulators globally: the EU AI Act applies to organizations both inside and outside the EU.

With our Responsible AI/Trustworthy AI Governance Service, you can prepare for the forthcoming EU AI Act by:

- Staying up-to-date with the EU AI Act, ensuring your systems follow existing and emerging compliance needs.

- Categorizing your AI systems to identify their risk categories (prohibited, high, limited, or minimal risk).

- Creating an actionable plan to reach compliance, with a readiness assessment and a tailored checklist for each AI system.

- Training teams across your organization on readiness and preparation for Trustworthy/Responsible AI.

What is the EU AI Act?

On March 13, 2024, the European Parliament approved the world’s most comprehensive legislation on artificial intelligence. This marks a turning point for AI, after a year of debate about the potential harms to society as this incredible technology unfolds.

With the final EU AI Act approved, Trusted AI is thrilled to unveil a simplified, strategic EU AI Act Service to formalize governance, risk, and compliance for AI.

We are partnering with the EU standards body and applying that knowledge to our clients' advantage.

What is the Trusted AI EU AI Act Service?

AI risk and governance are complex.

We provide a focused, expert service that simplifies compliance and integrates directly with your organization's existing processes, setting the stage for EU AI Act compliance and turning that compliance into a business advantage.

The regulation sets out a comprehensive set of rules for providers and users of AI systems, detailing the transparency and reporting obligations each entity has when a system is placed on the EU market. The requirements of the EU AI Act will apply not only to European companies but to all AI systems impacting people in the EU, including any company placing an AI system on the EU market and companies whose system outputs are used within the EU (regardless of where the systems are developed or deployed).

Establish trustworthy and safe AI systems with our streamlined process creation and review. We partner with technology providers to scale your AI compliance and AI risk management.

Benefits: Organizations will be able to innovate with speed, operate efficiently, and lead effectively in artificial intelligence.

Fines for non-compliance:

- Up to 7% of global annual turnover or €35m for prohibited AI violations.

- Up to 3% of global annual turnover or €15m for most other violations.

- Up to 1.5% of global annual turnover or €7.5m for supplying incorrect information.

- Caps on fines for SMEs and startups.
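To get a feel for the exposure, the fine ceilings above can be estimated with a few lines of Python. This is a minimal sketch, not a definitive calculation. The percentages and amounts are the ones quoted in the list. The rule that the higher of the two figures applies, and the lower one for SMEs and startups, is our reading of the Act rather than something stated on this page, so treat it as an assumption. The names `FineTier`, `FINE_TIERS`, and `max_fine_eur` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FineTier:
    description: str
    turnover_bps: int      # share of global annual turnover in basis points (700 = 7%)
    fixed_amount_eur: int  # fixed amount in whole euros


# Percentages and amounts as quoted in the list above.
FINE_TIERS = {
    "prohibited_ai": FineTier("Prohibited AI violations", 700, 35_000_000),
    "other": FineTier("Most other violations", 300, 15_000_000),
    "incorrect_info": FineTier("Supplying incorrect information", 150, 7_500_000),
}


def max_fine_eur(violation: str, global_turnover_eur: int, is_sme: bool = False) -> int:
    """Return the estimated ceiling on a fine, in whole euros."""
    tier = FINE_TIERS[violation]
    # Integer arithmetic avoids floating-point rounding on large amounts.
    turnover_based = global_turnover_eur * tier.turnover_bps // 10_000
    # Assumption: the higher figure is the ceiling, except for SMEs and
    # startups, where the lower figure is used.
    choose = min if is_sme else max
    return choose(turnover_based, tier.fixed_amount_eur)


if __name__ == "__main__":
    # Hypothetical company with EUR 2 billion in global annual turnover.
    print(max_fine_eur("prohibited_ai", 2_000_000_000))  # 140000000
```

For a large enterprise the turnover-based figure usually dominates, which is why the percentage matters more than the headline euro amount.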


What should businesses be doing?

As an organization building or using AI systems, you will be responsible for ensuring compliance with the EU AI Act and should use this time to prepare.

Compliance obligations will be dependent on the level of risk an AI system poses to people’s safety, security, or fundamental rights along the AI value chain.

The AI Act applies a tiered compliance framework. Most requirements fall on AI systems classified as “high-risk” and on general-purpose AI systems (including foundation models and generative AI systems) that are determined to be high-impact and to pose “systemic risks”.

Depending on the risk threshold of your systems, some of your responsibilities could include:

- Conducting a risk assessment to determine the level of risk associated with your AI system.

- Providing a conformity assessment stating that your system has been self-assessed using EU-approved technical standards or that it underwent third-party assessment by an accredited body within the European Union.

- Maintaining appropriate technical documentation and record-keeping.

- Providing transparency and disclosure about your AI system as follows:

  - Prohibited: No transparency obligations; these systems need to be removed from the market.

  - High-risk: Register high-risk AI systems on the EU database before placing them on the market.

  - Limited-risk: Inform and obtain the consent of people exposed to permitted emotion recognition or biometric categorization systems. Disclose and clearly label where visual or audio “deep fake” content has been manipulated by AI.

  - Minimal-risk: No transparency obligations.

- Ensuring that your AI system complies with the specific requirements for its level of risk when there are substantial modifications to the system’s intended purpose by the original provider or a third party.
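The tier-specific transparency duties listed above lend themselves to a simple lookup table. As a minimal sketch, assuming you already keep an inventory that maps each AI system to a risk tier, the Python below builds a per-system transparency checklist. The names `RiskTier`, `TRANSPARENCY_OBLIGATIONS`, and `transparency_checklist`, and the example system names, are hypothetical. The obligation wording is condensed from the list above and is not a complete statement of the Act's requirements.

```python
from enum import Enum


class RiskTier(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Transparency duties per tier, condensed from the list above.
TRANSPARENCY_OBLIGATIONS = {
    RiskTier.PROHIBITED: [
        "Remove the system from the market (no transparency obligations apply)",
    ],
    RiskTier.HIGH: [
        "Register the system on the EU database before placing it on the market",
    ],
    RiskTier.LIMITED: [
        "Inform and obtain consent from people exposed to permitted emotion "
        "recognition or biometric categorization",
        "Disclose and clearly label AI-manipulated visual or audio content",
    ],
    RiskTier.MINIMAL: [],
}


def transparency_checklist(systems: dict[str, RiskTier]) -> dict[str, list[str]]:
    """Map each system name to a copy of the duties for its risk tier."""
    return {
        name: list(TRANSPARENCY_OBLIGATIONS[tier])
        for name, tier in systems.items()
    }


if __name__ == "__main__":
    # Hypothetical inventory of AI systems and their assessed tiers.
    inventory = {
        "cv-screening": RiskTier.HIGH,
        "support-chatbot": RiskTier.LIMITED,
        "spam-filter": RiskTier.MINIMAL,
    }
    for name, duties in transparency_checklist(inventory).items():
        print(name, "->", duties or ["No transparency obligations"])
```

Keeping the tier-to-duty mapping in one place means a system that is reclassified after a substantial modification picks up its new checklist automatically.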

Complexity of compliance:

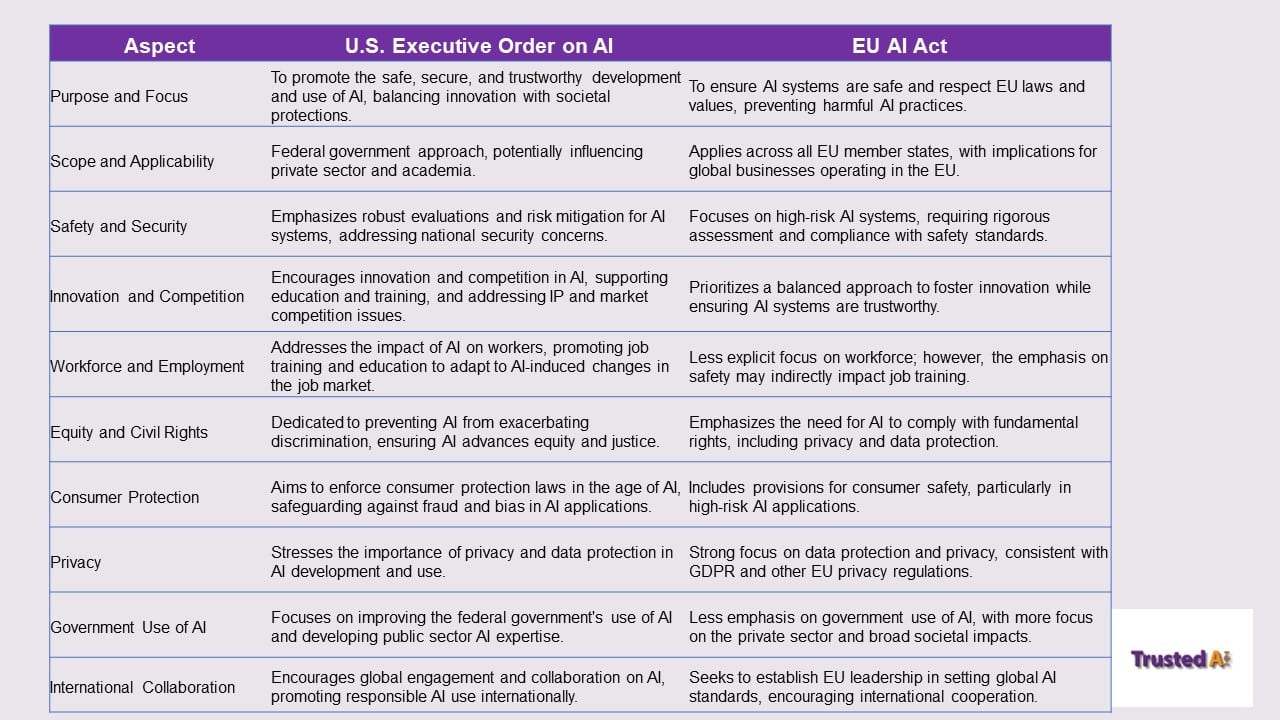

In the US, the Biden administration issued an Executive Order on the “safe, secure, and trustworthy development and use of artificial intelligence” and encouraged the FTC to expand its regulatory activities in AI. On November 21, 2023, the Federal Trade Commission (FTC) approved a resolution to facilitate the issuance of Civil Investigative Demands (CIDs) in AI-related investigations. This 10-year resolution streamlines the issuance of these demands, which function like subpoenas, while the FTC retains ultimate control over their use. The action aligns with the FTC’s broader strategy under Chair Lina Khan to enhance its regulatory authority in the AI sector.

The FTC’s move reflects a growing focus on the risks AI may pose to consumers. It acknowledges both the benefits of AI and its possible misuses, such as deception or discrimination, and further commits the agency to protecting individual rights against violations carried out through advanced technologies.

The FTC’s new resolution positions it to investigate AI companies more vigorously for illegal or unfair practices, underlining its ambition to become a leading AI regulator. The FTC has already initiated such actions.

Do you know the key to developing a compliance strategy that aligns with both the EU and US approaches?